Rebecca Quintana, Learning Experience Design Lead

@rebquintana

Yuanru Tan, Learning Experience Designer for Accessibility

@YuanruTan

Wenfei Yan, Data Science Fellow

Reflection on Cross-Platform Comparison Project

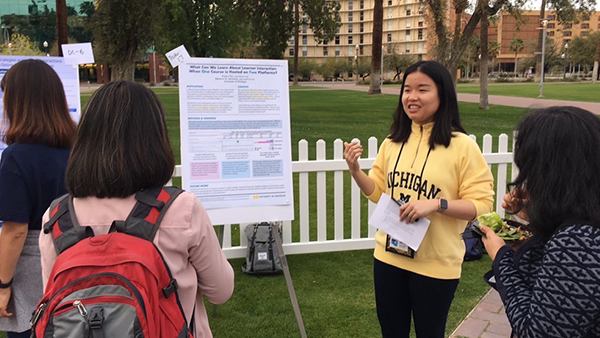

Yuanru Tan and Rebecca Quintana are Learning Experience Designers (LXDs) and researchers in the Office of Academic Innovation. Last summer, they embarked on a learning analytics project to investigate discussion forum interactions in Massive Open Online Courses (MOOCs), looking at data from one course that was hosted on two different platforms. Their submission titled “What Can We Learn About Learner Interaction When One Course is Hosted on Two MOOC Platforms?” was accepted as a poster at the 9th International Learning Analytics & Knowledge (LAK) Conference in Tempe, Arizona. By conducting a social network analysis using MOOC discussion forum data from a single data science ethics course that ran concurrently on two different MOOC platforms (Coursera and edX), they identified higher network connectedness and network centralization on the edX platform and lower cohesion within the Coursera network as a whole.
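To make the two network measures mentioned above more concrete, here is a minimal sketch of how density (one common way to quantify connectedness) and Freeman degree centralization can be computed from forum reply data. It is illustrative only: the post does not describe the tools or exact metric definitions the authors used, the reply pairs are made up, and the function names (`build_graph`, `density`, `degree_centralization`) are hypothetical.

```python
from collections import defaultdict


def build_graph(replies):
    """Build an undirected graph (adjacency sets) from (replier, author) pairs.

    Assumption: each pair means one learner replied to another learner's post.
    """
    graph = defaultdict(set)
    for replier, author in replies:
        if replier == author:
            continue  # ignore self-replies
        graph[replier].add(author)
        graph[author].add(replier)
    return dict(graph)


def density(graph):
    """Share of possible learner-to-learner ties that actually exist."""
    n = len(graph)
    if n < 2:
        return 0.0
    edges = sum(len(neighbors) for neighbors in graph.values()) / 2
    return edges / (n * (n - 1) / 2)


def degree_centralization(graph):
    """Freeman degree centralization: 1.0 for a star, 0.0 when all degrees are equal."""
    n = len(graph)
    if n < 3:
        return 0.0
    degrees = [len(neighbors) for neighbors in graph.values()]
    max_degree = max(degrees)
    return sum(max_degree - d for d in degrees) / ((n - 1) * (n - 2))


# Made-up toy data: a hub-and-spoke forum thread versus a chain of replies.
star_replies = [("b", "a"), ("c", "a"), ("d", "a")]
chain_replies = [("a", "b"), ("b", "c"), ("c", "d")]

for name, replies in [("star", star_replies), ("chain", chain_replies)]:
    g = build_graph(replies)
    print(name, round(density(g), 2), round(degree_centralization(g), 2))
```

In this toy data both networks have the same density, but the star-shaped one is fully centralized around a single learner, which shows why the two measures are reported separately.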

This work illustrated how technical features of MOOC platforms may affect social interaction and the formation of learner networks. As LXDs working within the Office of Academic Innovation, where they partner with the two leading online education platforms (i.e., Coursera and edX), Yuanru and Rebecca can use this preliminary work on MOOC discussion forums to think further about the factors that foster interaction among learners in online discussion forums. For instance, on edX, existing posts are visible to learners before they respond to a prompt, so learners can choose to react to earlier posts in addition to posting their own thoughts, while on Coursera, learners must respond to the prompt without seeing earlier posts. This may account for the higher participation rate on Coursera, as well as its lower level of learner-to-learner interaction. In their research they ask questions like, “How can we take advantage of different platform features to design engaging online learning experiences?” Yuanru had the opportunity to share their work in one of the most prominent learning analytics communities. This experience left Yuanru and Rebecca with tremendous insights and a desire to continue to investigate these important questions in future work.

LAK Reflections & Takeaways

Reflections from Yuanru Tan, Learning Experience Designer for Accessibility – With 525 unique attendees and 900+ participants over the 5 days of pre-conference workshops and the main conference, LAK 2019 was the largest in its 9-year history. As a first-time LAK attendee, I was fascinated by the interdisciplinary experience one could have at LAK. During the pre-conference workshops, I attended a machine learning workshop hosted by Dr. Erkan Er, a postdoctoral researcher at the University of Valladolid, Spain. Together with about 20 other participants, I spent an afternoon exploring the topic “How to Generate Actionable Predictions on Student Engagement with Python Scikit-Learn.” Dr. Er delivered an informative session introducing machine learning approaches for creating actionable predictions (i.e., in-situ learning and transferring across courses) that can be useful for designing real-world interventions. This hands-on workshop also gave participants space to reflect on their own experience building predictive models and to share their opinions on the future use of these approaches in research and practice.

During the main conference, there were so many great concurrent sessions and talks that I struggled to find a schedule that would let me attend everything I was most interested in. Several talks were especially intriguing to me. Weijie Jiang and Zach Pardos from the University of California, Berkeley developed a recurrent neural network-based course recommendation system that draws on students’ target courses of interest, estimated prior knowledge, and zone of proximal development. Abelardo Pardo from the University of South Australia shared his team’s progress on understanding both students’ self-reported self-efficacy and cognitive load data and the trace-based measures of the two constructs detected by the system. Furthermore, as a University of Michigan alumna working at the Office of Academic Innovation, I was proud to see that U-M has such a strong presence at LAK and that our office played a supportive role in some of the research presented by the U-M cohort, e.g., “Social Comparison in MOOCs: Perceived SES, Opinion, and Message Formality,” presented by Heeryung Choi, and “Beyond A/B Testing: Sequential Randomization for Developing Interventions in Scaled Digital Learning Environments,” presented by Timothy Necamp.

Reflections from Wenfei Yan, Data Science Fellow – LAK was an awesome first conference experience for me. The atmosphere was as warm and welcoming as the weather in Tempe. I was deeply impressed by the insightful keynote speeches. I especially liked the 5th challenge for the learning analytics field presented by Ryan Baker from the University of Pennsylvania, which is the generalizability of models. As a student currently studying data science, I have learned in every course that the generalization of a model is critical but requires effort to achieve. Considering the breadth of learning analytics applications, I believe model generalization is both challenging and useful in this field.

In the Dialogue & Engagement session on Wednesday, I presented a case study on the Privacy, Reputation, and Identity in a Digital Age Teach-Out, which explores learner engagement patterns in Teach-Outs. Learn more about the Teach-Out Series on Michigan Online. Understanding learning behaviors in the discussion forum is particularly crucial for the development of Teach-Outs, as they do not include formal assessments by design. As such, we used unsupervised natural language processing (NLP) techniques to extract information from learner dialogue and arrived at the following findings:

- Learner discussion topics were generally closely related to the Teach-Out content. Further, there were no extremely on-topic or off-topic posts, which could indicate that learners were discussing on-topic concepts while incorporating their own experiences. This would be a desirable sign considering the design goal of Teach-Outs.

- There were more positive emotions than negative emotions in the discussion forum. However, due to the topic of this specific Teach-Out, on-topic posts were actually more negative, as they mostly expressed concerns about the state of privacy in a digital era.

This was a first pilot study on the Teach-Out series, and we hope it helps us learn more about learner engagement during a Teach-Out.

At the Office of Academic Innovation, we have been actively exploring ways to understand online education. Attending the LAK conference and sharing our work with a community of experts from interdisciplinary fields was a very inspiring experience. We are looking forward to exploring further in the future!